Digital Fortress Europe #3: Automation and surveillance in Fortress Europe

Artificial intelligence and algorithms are at the heart of the EU’s new mobility-control system. High-risk automated decisions are being made about human lives. This is an emerging, unregulated, multi-billion-euro market with dystopian ‘smart’ applications.

In January 2020, Robert Williams, an African-American resident of Detroit, was arrested at the entrance of his home in front of his two young daughters. No one could tell him why. At the police station, he was told that he was a suspect in a 2018 store robbery, because his face had been matched to the store’s security footage. The match was based on an old driver’s licence photo. After thirty hours in custody, Williams was eventually released. The Detroit police officers’ admission was disarmingly blunt: “the computer probably made a mistake.”

Michael Oliver, also an African-American Detroit resident, had a similar experience in June 2019, when he was arrested after his face was allegedly identified in security-camera footage. He was taken to trial and eventually acquitted, three months after his arrest.

In a test of Amazon’s Rekognition facial recognition software, the program incorrectly matched 28 members of Congress (!) with people who had previously been arrested for a crime. The false matches disproportionately involved Black and Latino members. Nor should anyone assume that this happens only in the US.

As discussed in the previous two parts of MIIR’s research on “The Digital Walls of Fortress Europe”, the EU has in recent years introduced, as part of a new architecture of border surveillance and mobility control, a number of systems to record and monitor people moving around the European space. The EU uses different funding mechanisms for research and development, with an increasing emphasis on artificial intelligence (AI) technologies, some of which also use biometric data. The most relevant of these between 2007 and 2013 (with projects running until 2020) was the Seventh Framework Programme (FP7), followed by Horizon 2020. Together, the two programmes have funded EU security projects worth more than €1.3 billion. For the current 2021-2027 period, Horizon Europe has a total budget of €95.5 billion, with a particular focus on ‘security’ issues.

Technologies such as automated decision-making, biometrics, thermal cameras and drones are increasingly being used to control migration, affecting millions of people on the move. Border management has become a profitable multi-billion-euro business in the EU and other parts of the world. According to an analysis by TNI (Border War Series), the border-security market is expected to grow by 7.2% to 8.6% a year, reaching a total of USD 65-68 billion by 2025.

The largest expansion is in the global biometric data and AI markets. The biometrics market alone is projected to double its turnover, from $33 billion in 2019 to $65.3 billion by 2024. A significant part of the funding is directed towards enhancing the capabilities of EU-LISA (European Agency for the Operational Management of Large-Scale IT Systems in the Area of Freedom, Security and Justice), which is expected to play a key role in managing the interoperability of databases for mobility and security control. The agency’s activities are funded by:

- a grant from the general budget of the EU;

- a contribution from the countries associated with the operation of the Schengen area and with Eurodac-related measures;

- direct financial contributions from member states.

Chris Jones, Executive Director of the non-profit organisation Statewatch, has spent several years following the money trail that starts in Brussels. He explains that “EU research projects are usually run by consortia of private companies, public bodies and universities. Private companies receive the largest sums, more than public bodies.” A recent Statewatch study (Funds for Fortress Europe: spending by Frontex and EU-LISA, January 2022) shows that around €1.5 billion went to private contractors for the development and strengthening of EU-LISA in the period 2014-2020, with the largest increase coming after 2017, in the wake of the peak of the refugee crisis.

The surveillance oligopoly

One of the most important contracts signed in 2020, worth €300 million, went to the French companies Idemia and Sopra Steria for the implementation of a new Biometric Matching System (BMS). These companies often win new contracts because they already hold maintenance agreements for the EES, EURODAC, SIS II and VIS systems. Other companies awarded high-value contracts for EU-LISA-related work include Atos, IBM and Leonardo (€140 million), as well as the consortium of Atos, Accenture and Morpho (later Idemia), which signed a €194 million contract in 2016. Data collected by Statewatch also shows that companies with Greek interests have taken part, usually through joint ventures, in the expansion of the EU-LISA systems. They include Unisystems SA (owned by the Quest Group of Th. Fessas, former President of the Association of Greek Industrialists), which signed a €45 million contract in 2019. Similarly, European Dynamics SA (owned by Konstantinos Velentzas) participated in a €187 million contract awarded in 2020, and Luxembourg-based Intrasoft International SA (previously owned by Kokkalis interests) is participating with five other companies in a €187 million project from 2020.

EU-LISA’s relationship with industry is also illustrated by the joint events it frequently holds, such as the “roundtable with industry” to be held on 16 June 2022 in Strasbourg. This will be the 15th such meeting in a row and will bring together EU bodies, representatives of mobility-management systems and private individuals. “There are extensive, long and very secret negotiations between member states and MEPs whenever they want to change something in the databases. But we don’t know what the real influence of the companies running these systems is, whether they are advising on what is technically feasible and how all this interacts with the political process,” says Statewatch’s Chris Jones. The content of the contracts signed between the consortia and EU-LISA also remains unknown, as they are not published.

The new frontier of AI and the pressures on the EU

In April 2021, the European Commission published its long-awaited draft regulation on artificial intelligence (the AI Act). The consultation process is expected to take some time. This important piece of legislation runs to more than 200 pages and will, among other things, refine the data protection legislation (Directive (EU) 2016/680). Companies and operators in the sector are expected to exert considerable pressure until the bill is submitted in its final form to the European Parliament.

MIIR examined the records of official meetings on AI and digital policy between European Commission President Ursula von der Leyen, Commissioner Margrethe Vestager (“A Europe Fit for the Digital Age”), Commissioner Thierry Breton (Internal Market) and their staffs between December 2019 and March 2022. It found that at least 14 agencies, private-sector giants and consortia of companies linked to the security and defence sector met key representatives of the European Commission 71 times in 28 months to discuss digital policy and AI. Most meetings with the Commissioners were held by DIGITALEUROPE, an organisation representing 78 corporate members, including major defence and security companies such as Accenture, Airbus and Atos. Other consortia were also found to be lobbying heavily, such as the European Round Table for Industry (ERT), which represents a number of defence and security companies including Leonardo, Rolls-Royce and Airbus.

High-risk systems

The proposal for the European regulation (COM/2021/206 final), adopted in April 2021, gives a good overview of the AI systems and applications that are expected to be regulated, and of the risks of their unregulated use at Europe’s entry points. It states: “[…] it is appropriate to classify as high-risk AI systems intended to be used by the competent public authorities responsible for tasks in the areas of immigration management, asylum and border control as polygraphs and similar tools or for detecting the emotional state of an individual; for assessing certain risks presented by natural persons entering the territory of a member state or applying for a visa; for assessing the risk of a person’s personal data […]”

The critical parameter

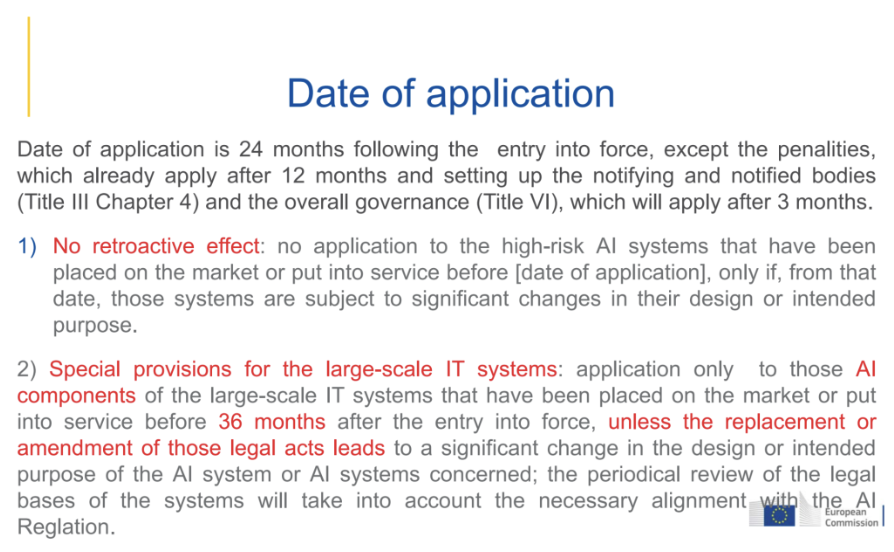

The range of areas in which ‘high-risk’ AI systems can be deployed appears to be wide. Despite hopes that the new regulation will govern how they operate, one parameter may rule this out. As revealed in a presentation at an internal European Commission review held in May, brought to light by Statewatch, the new regulation, if passed, will come into force 24 months after it is signed and will not apply to all systems, as it is not expected to apply retroactively to those already on the market before that date.

“It’s as if [the Commission] is clearly saying, ‘yes, we should control the use of artificial intelligence and machine learning in a responsible way. But we won’t do it for the systems we’re already building because… we have other ideas for them…’,” comments Chris Jones. The issue is also raised in a joint statement issued in November under the auspices of the EDRi digital rights network and signed by 114 civil society organisations, which highlights that “no reasonable justification for this exemption from the AI regulation is included in the bill or provided”. In the statement, they call on the Council of the European Union, the European Parliament and member state governments to include accountability safeguards in the final bill that will guarantee a secure framework for the implementation of AI systems and, most importantly, the protection of the fundamental rights of European citizens.

Robo-dogs in action: Algorithms and nightmarish research projects

“There is a great effort by EU institutions and member states to increase the number of deportations. The EU has poured money and resources into these databases essentially to say ‘we want to help remove these people from European soil’,” Statewatch’s Chris Jones points out. Indeed, automation and industry-pushed algorithmic tools are already playing an important role at Europe’s entry points, raising many questions about how the rights of refugees and migrants are safeguarded. Critics of these EU projects are worried not only about the profiling itself but also about the quality of the data on which it is based. “It looks like a ‘black box’, where we don’t know exactly what’s inside,” says refugee law specialist and anthropologist Petra Molnar, whose work focuses on the risks of automated decision-making without human involvement when human lives are at stake.

Some of the major pilot systems funded in the past few years include the following:

iBorderCtrl – “smart” lie detectors: The project combines facial matching and document authentication tools with AI technologies. Tested in Hungary, Greece and Latvia, this “lie detector” used a “virtual border guard”, personalised for the traveller’s gender, nationality and language, which asked questions via a digital camera. The project received €4.5 million from the European Union’s Horizon 2020 programme and has been heavily criticised as dangerous and pseudo-scientific (“Sci-fi surveillance: Europe’s secretive push into biometric technology”, The Guardian, 10 December 2020; “We Tested Europe’s New Lie Detector for Travelers – and Immediately Triggered a False Positive”, The Intercept, 26 July 2019).

It was piloted under simulated conditions in early July 2019 at the premises of TRAINOSE in Athens, in a specially designed area of the Centre for Security Studies (KEMEA). Before departure, the traveller had to upload a photo of their ID card or passport to a special application. They then answered questions posed by a virtual border guard. Special software recorded their words and facial movements, including movements that might escape the naked eye, and finally calculated, supposedly, the traveller’s degree of sincerity.

On 2 February 2021, the European Court of Justice ruled on a lawsuit brought by MEP and activist Patrick Breyer (Pirate Party) over the secrecy surrounding this research project, which he called pseudo-scientific and Orwellian.

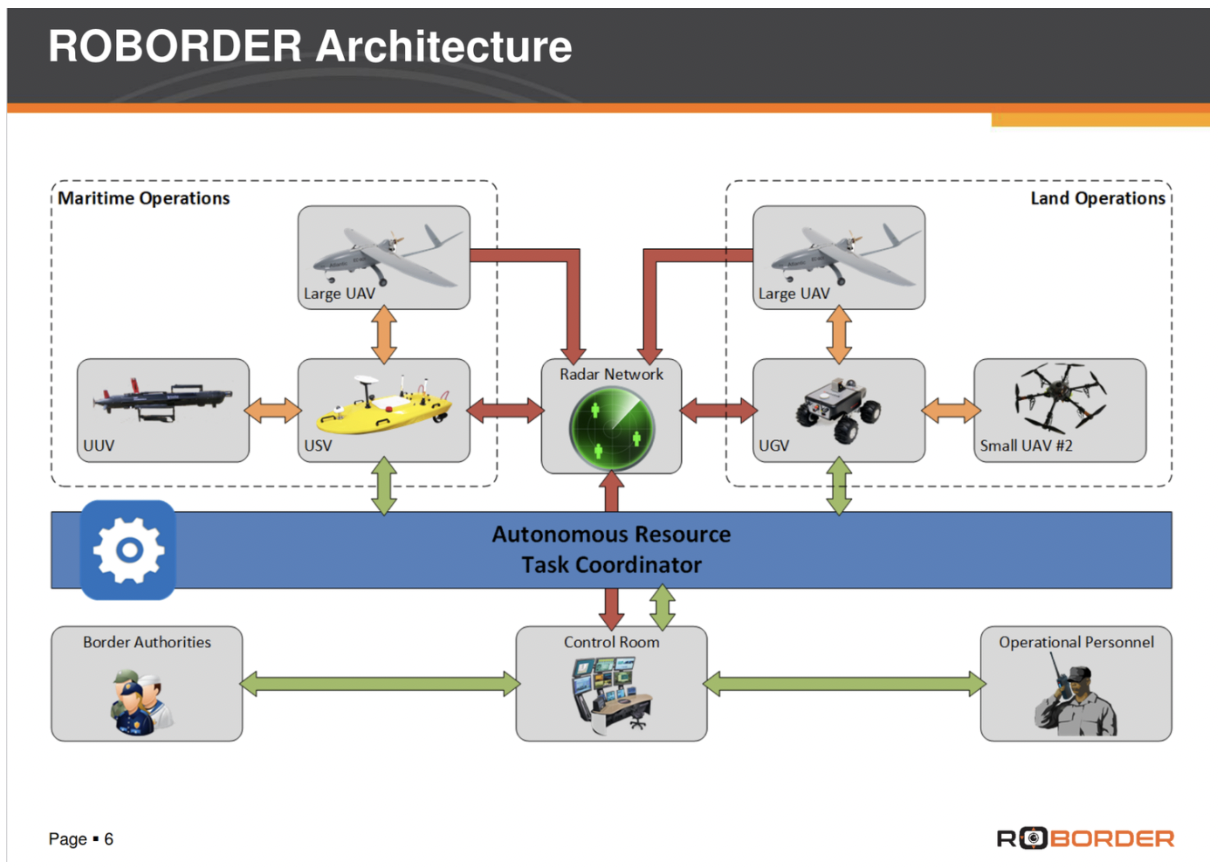

Roborder (an autonomous swarm of heterogeneous robots for border surveillance): The project aims to develop an autonomous border surveillance system using unmanned robots, including aerial, maritime, underwater and ground vehicles. The entire robotic platform integrates multimodal sensors into a single interoperable network. The project’s final pilot test, in which the Greek Ministry of National Defence participated, took place in Greece from 28 June to 1 July 2021.

Foldout: The €8.1 million Foldout research project does not hide its aims: “in recent years irregular migration has increased dramatically and is no longer manageable with existing systems”. The main idea of the project, piloted in Bulgaria and being rolled out in Finland, Greece and French Guiana, is to place motion sensors on land sections of the border where terrain or vegetation makes irregular crossings difficult to detect. Whenever suspicious movement by a person or vehicle is detected, it will be possible to send a drone to the spot or to activate ground cameras for additional monitoring. The consortium developing it is coordinated by the Austrian Institute of Technology (which has received €25 million from 37 European projects).

Among the organisations lobbying for these projects at European level, we came across EARTO, a network of research centres and project beneficiaries in various fields, including security. Its members include KEMEA in Greece, the Fraunhofer-Gesellschaft (140 EU-funded research projects, including Roborder) and the Austrian Institute of Technology (Foldout).

Many of the Horizon 2020 research projects (Roborder, iBorderCtrl, Foldout, Trespass, etc.) have been described by their own developers as still too “immature” for widespread use. However, the broader shift in the European Union’s approach to using AI for mobility control and crime prevention can be seen in the ever-increasing funding from the Internal Security Fund. One such project is the procurement of thousands of mobile devices for the Greek police that will allow citizens to be identified using facial recognition and fingerprinting software. The total cost of the project, undertaken by Intracom Telecom, exceeds €4 million, 75% of which comes from the Internal Security Fund.

The Samos “experiment”

“Borders and immigration are the perfect laboratory for experiments. Opaque, high-risk conditions with low levels of accountability. Borders are becoming the perfect testing ground for new technologies that can later be used more extensively on different communities and populations. This is exactly what you see in Greece, right?” asks lawyer Petra Molnar. The answer is yes, in both the north and the south of the country.

On the island of Samos, on Greece’s south-eastern border with Turkey, two pilot systems called HYPERION and CENTAUR are being put into operation at the new migrant camp, which the Greek government has been all but advertising.

HYPERION is an asylum management system covering all the needs of the Reception and Identification Service. It processes the biometric and biographical data of asylum seekers, as well as of NGO members visiting the facilities and of the staff working in them. It is planned to be the main tool for running the Closed Reception Centres (CRCs), as it will handle access control, the monitoring of benefits for each asylum seeker (food, clothing supplies, etc.) through an individual card, and movements between the CRCs and accommodation facilities. The project also includes a mobile phone application that will provide users with personalised information and act as their electronic mailbox for their asylum application process.

CENTAUR is a digital system for managing electronic and physical security around and within the premises, using cameras and AI behavioural analytics algorithms. It includes centralised management by the Ministry of Digital Governance and services such as: perimeter-breach alarms based on cameras (capable of thermometry, focusing and rotation) and motion analysis algorithms; alarms for unlawful behaviour by individuals or groups in assembly areas inside the facility; and the use of unmanned aircraft systems to assess incidents inside the facility without human intervention.

“CENTAUR uses cameras with a great ability to focus on specific individuals, cameras that can also take someone’s temperature. The most important thing is not that CENTAUR will use these images for security purposes, but that behavioural analysis algorithms will also be used, without any explanation of exactly what that means,” says lawyer and Homo Digitalis member Kostas Kakavoulis. As he points out, “an algorithm learns to reach certain conclusions based on the data we have given it. Such an algorithm will be able to conclude that person X may be showing increasingly aggressive behaviour and may attack other asylum seekers or guards, or may want to escape from the accommodation facility illegally. Another use of behaviour analysis algorithms is lie analysis, which can judge whether our behaviour and our words reflect the truth or not. This is mainly done through the analysis of biometric data, the data we all produce through our movement in space, our physical presence and our physical appearance, and also the way we move our hands, the way we blink or the way we walk, for example. All this may seem insignificant, but if someone can collect it over a long period and correlate it with the data of many other people, they may be able to reach conclusions about us that might surprise us: how aggressive our behaviour can be, how much anxiety we have, how afraid we are, whether we are telling the truth or not.” Under current legislation, decisions based solely on the automated processing of personal data, without the possibility of human intervention, are in principle prohibited.

Lawyer Petra Molnar has recently been researching the effects of AI applications on the control of migration flows. She was on Samos for the opening of the new closed reception centre. “Multiple layers of barbed wire, cameras everywhere, fingerprint stations at the turnstiles, entry-exit points. Refugees see it as a prison complex. I will never forget this: on the eve of the opening I was at the old camp in Vathi, Samos. We talked to a young mother from Afghanistan. She was pushing her young daughter in a pram and hurriedly typed a message on her phone that said: ‘If we go there, we’ll go crazy’. Every time I look at camps with these systems, I realise that they embody the fear people feel when they are about to be isolated, and surveillance technologies are used to further control their movements.”

Médecins Sans Frontières described the new facility on Samos as a “dystopian nightmare”. They were not alone. “The CENTAUR system is built around technologies that are highly intrusive with regard to privacy, personal data and other rights, such as behavioural and motion analysis algorithms, drones and closed-circuit surveillance cameras. There is a serious possibility that the installation of the HYPERION and CENTAUR systems may violate European Union legislation on the processing of personal data and the provisions of Law 4624/2019”, the NGO Homo Digitalis points out. On 18 February 2022, the Hellenic League for Human Rights, HIAS Greece, Homo Digitalis and Dr Niovi Vavoula, a lecturer at Queen Mary University of London, filed a request with the Greek Data Protection Authority (DPA) asking it to exercise its investigative powers and issue an Opinion on the supply and installation of the systems. On Wednesday 2 March 2022, the Authority opened an investigation into the Ministry of Migration and Asylum in relation to the two systems.

The automation fetish

“The problem is that authorities and politicians are beginning to see advanced data analytics as a source of some kind of objective and unbiased knowledge about security issues, because it has this aura of mathematical precision. But artificial intelligence and machine learning can in fact be very accurate at reproducing and magnifying the biases of the past. We should remember that poor-quality data will only lead to poor, biased automated decisions,” says researcher Georgios Glouftsios.

We wonder why we use robot dogs, sound cannons and lie detectors at our borders but do not use AI to weed out, for example, racist border guards.

Flash forward. It is 2054, and the Washington DC police department has created a special “pre-crime” unit that arrests suspects before they even commit a crime. The predictions are made by three mutant human beings who are kept in a state of permanent hypnosis and are able to see the future, including the would-be criminal, before he or she goes through with the act. It is a stretch, for the moment, to claim that we are approaching the world imagined by Philip K. Dick in Minority Report. What is not far off, however, is the spread of various behavioural analysis systems, including lie detectors and facial and emotion recognition software, with automated decision-making on the horizon. In a context where the EU’s external borders are being militarised and people on the move are treated as a potential threat, all this risks creating a dangerous human laboratory: a high-risk experiment with fundamental human rights.

https://miir.gr/en/automation-and-surveillance-in-fortress-europe/

This article has been produced within the Panelfit project, supported by the Horizon 2020 programme of the European Commission (grant agreement no. 788039). The Commission did not take part in the production of the article and is not responsible for its content. The article is part of the independent journalistic production of EDJNet.